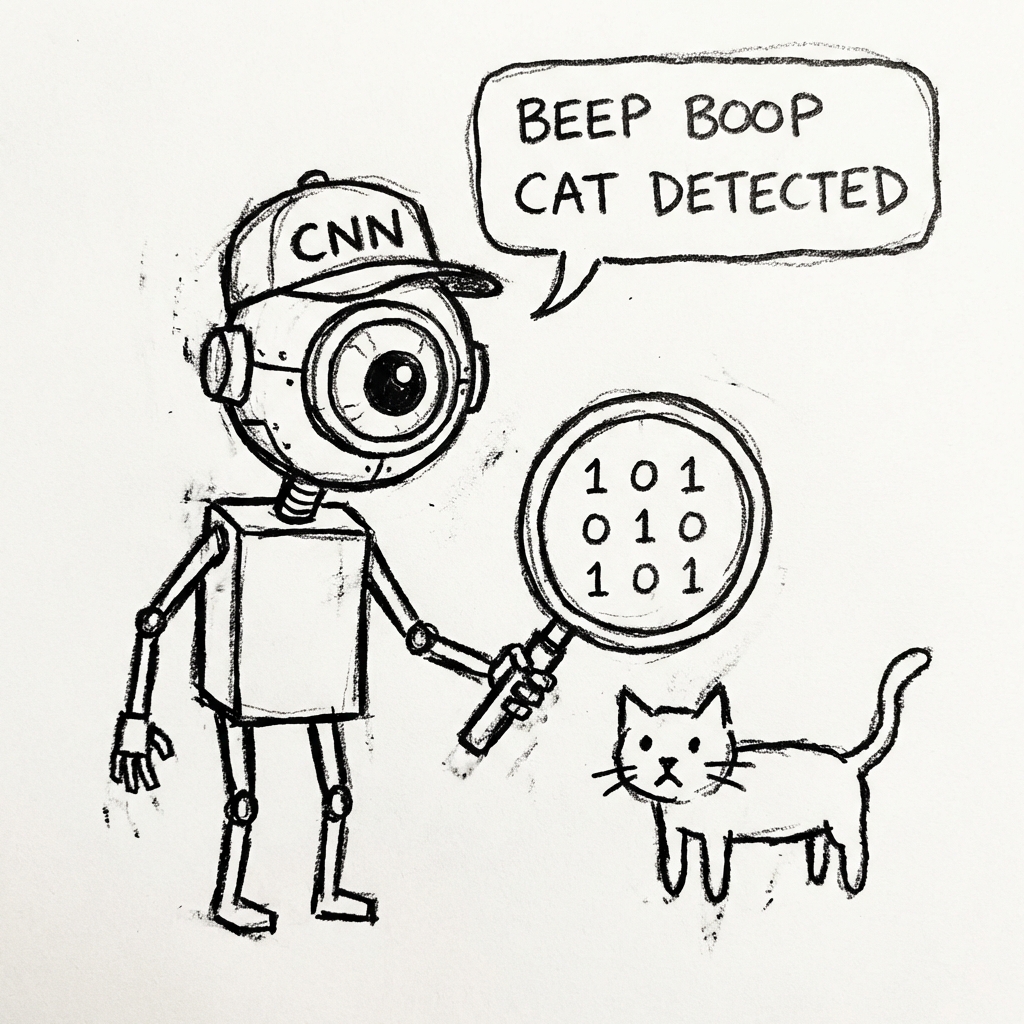

ML 9: The Eyeball (CNNs)

How do computers actually see images? CNNs explained in plain English with an interactive demo where you can pass an image through every layer yourself.

Seeing the World

Normal Neural Networks (MLPs) struggle with images. An image is just a grid of pixels. A 1000x1000 grayscale image has 1,000,000 inputs. If you feed all 1 million pixels into a Dense layer with just 1,000 neurons, that single layer needs about a billion weights. It's too much to store and too much to train.
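To put rough numbers on "too much", here is a small back-of-the-envelope sketch. The layer width of 1,000 neurons is an assumption picked for illustration, and the helper names `dense_params` and `conv_params` are made up for this example. For comparison it also counts the weights in a layer of 32 small 3x3 filters, the kind of layer a CNN uses instead.

```python
def dense_params(n_inputs, n_neurons):
    """Weights plus biases in a fully connected (dense) layer."""
    return n_inputs * n_neurons + n_neurons


def conv_params(kernel_h, kernel_w, in_channels, n_filters):
    """Weights plus biases in a convolutional layer.

    The count does not depend on the image size, because the same
    small filter is reused at every position in the image.
    """
    return kernel_h * kernel_w * in_channels * n_filters + n_filters


pixels = 1000 * 1000                # 1000x1000 grayscale image
dense = dense_params(pixels, 1000)  # assumed layer width: 1,000 neurons
conv = conv_params(3, 3, 1, 32)     # 32 filters of size 3x3, 1 input channel

print(f"Dense layer: {dense:,} parameters")  # Dense layer: 1,000,001,000 parameters
print(f"Conv layer:  {conv:,} parameters")   # Conv layer:  320 parameters
```

The dense layer's cost grows with the image size. The filter layer's cost stays the same whether the image is 28x28 or 1000x1000.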

Enter the Convolutional Neural Network (CNN).

The Scanner

Instead of looking at the whole image at once, a CNN looks at small chunks. Imagine looking at a picture through a paper towel roll. You scan across.

- Filters (Kernels): Small 3x3 grids of numbers that look for specific things.

  - One filter looks for Vertical Lines.

  - One filter looks for Horizontal Lines.

  - One filter looks for Diagonal Lines.

- Pooling: Shrinking the image by keeping one summary value per small region. "Okay, this area is generally dark."

- Layers:

  - Layer 1 sees Lines.

  - Layer 2 combines lines to see Shapes (Eyes, Ears).

  - Layer 3 combines shapes to see Objects (Cat Face).
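The scanning and shrinking steps can be sketched in plain Python. This is a minimal illustration, not how Keras implements it: the tiny 6x6 image and the two hand-written filters are made up for the example (a real CNN learns its filter values during training), and the function names are hypothetical. Like deep learning libraries, it slides the filter without flipping it.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Multiply the 3x3 chunk by the filter and add everything up.
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        feature_map.append(row)
    return feature_map


def max_pool(feature_map, size=2):
    """Keep only the largest value in each size x size block."""
    pooled = []
    for r in range(0, len(feature_map) - size + 1, size):
        row = []
        for c in range(0, len(feature_map[0]) - size + 1, size):
            row.append(max(feature_map[r + i][c + j]
                           for i in range(size) for j in range(size)))
        pooled.append(row)
    return pooled


# Made-up 6x6 image: dark (0) on the left, bright (1) on the right,
# so there is one vertical edge and no horizontal edge.
image = [[0, 0, 1, 1, 1, 1] for _ in range(6)]

vertical_filter = [[-1, 0, 1],
                   [-1, 0, 1],
                   [-1, 0, 1]]
horizontal_filter = [[-1, -1, -1],
                     [0, 0, 0],
                     [1, 1, 1]]

vertical_map = convolve2d(image, vertical_filter)
horizontal_map = convolve2d(image, horizontal_filter)

print(vertical_map[0])           # [3, 3, 0, 0]
print(horizontal_map[0])         # [0, 0, 0, 0]
print(max_pool(vertical_map))    # [[3, 0], [3, 0]]
print(max_pool(horizontal_map))  # [[0, 0], [0, 0]]
```

The vertical filter lights up where the dark-to-bright edge is and stays at zero elsewhere, while the horizontal filter finds nothing. Pooling shrinks each 4x4 map to 2x2 but still remembers that the edge was on the left.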

How a CNN Sees

[Interactive demo: watch filters transform real images.]

CNNs use many filters to detect features such as edges, textures, and shapes.

Feature Maps

A CNN doesn't "see" a cat. It sees:

- A map of where the fluffy texture is.

- A map of where the pointy ears are.

- A map of where the whiskers are.

If all those maps light up, it guesses "CAT".
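Here is a toy sketch of that last step. Everything in it is made up for illustration: the feature map values, the weights, and the two classes. It also takes a shortcut by summarizing each map with its single strongest value (global max pooling) before scoring, which is simpler than flattening every value the way the Keras model in this post does.

```python
import math


def global_max(feature_map):
    """How strongly did this feature show up anywhere in the image?"""
    return max(max(row) for row in feature_map)


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    biggest = max(scores)
    exps = [math.exp(s - biggest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up feature maps: higher numbers mean "this feature is here".
feature_maps = {
    "fluffy_texture": [[0.1, 0.9], [0.8, 0.7]],
    "pointy_ears": [[0.9, 0.0], [0.0, 0.8]],
    "whiskers": [[0.0, 0.2], [0.7, 0.1]],
}

# Hypothetical weights: how much each feature counts as evidence
# for each class. A real network learns these during training.
weights = {
    "cat": {"fluffy_texture": 2.0, "pointy_ears": 2.0, "whiskers": 2.0},
    "car": {"fluffy_texture": -1.0, "pointy_ears": -0.5, "whiskers": -1.0},
}


def classify(feature_maps, weights):
    strengths = {name: global_max(fmap) for name, fmap in feature_maps.items()}
    classes = list(weights)
    scores = [sum(weights[cls][name] * strengths[name] for name in strengths)
              for cls in classes]
    return dict(zip(classes, softmax(scores)))


probs = classify(feature_maps, weights)
guess = max(probs, key=probs.get)
print(guess)  # cat
```

All three maps light up, every one of them counts as evidence for "cat", and so the cat score wins.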

The Code (Keras/TensorFlow)

from tensorflow.keras import layers, models

model = models.Sequential()

# 1. The Scanner (Conv2D)
# 32 filters, 3x3 size. Input: 28x28 grayscale image -> output: 26x26x32.
model.add(layers.Conv2D(32, (3, 3), activation='relu', input_shape=(28, 28, 1)))

# 2. The Shrinker (MaxPooling)
# Keeps the largest value in each 2x2 block -> output: 13x13x32.
model.add(layers.MaxPooling2D((2, 2)))

# 3. Another Scanner
# 64 filters, 3x3 size -> output: 11x11x64.
model.add(layers.Conv2D(64, (3, 3), activation='relu'))

# 4. Flatten and Decide (Standard Neural Net at the end)
# 11 * 11 * 64 = 7744 values feed 10 output classes.
model.add(layers.Flatten())
model.add(layers.Dense(10, activation='softmax'))

Summary

CNNs revolutionized AI. Before them, Computer Vision leaned on hand-crafted features and struggled with messy real-world images. Now, your phone can unlock with your face, and your car can see stop signs (mostly).

Next up: What if the data is a sequence, like a sentence?