ML 7: The Blind Hiker (Gradient Descent)

Gradient descent is just a blind hiker walking downhill. Understand how nearly every neural network learns, in 3 minutes, with zero calculus required.

🎮 Play with this: The Blind Hiker, Gradient Descent Playground. Drag the ball, change the function, and break things on purpose.

The Root of All Learning

How does a Neural Network actually "learn"? How does the line in Linear Regression find the best angle to fit the data?

It uses Gradient Descent.

The Mountain Analogy

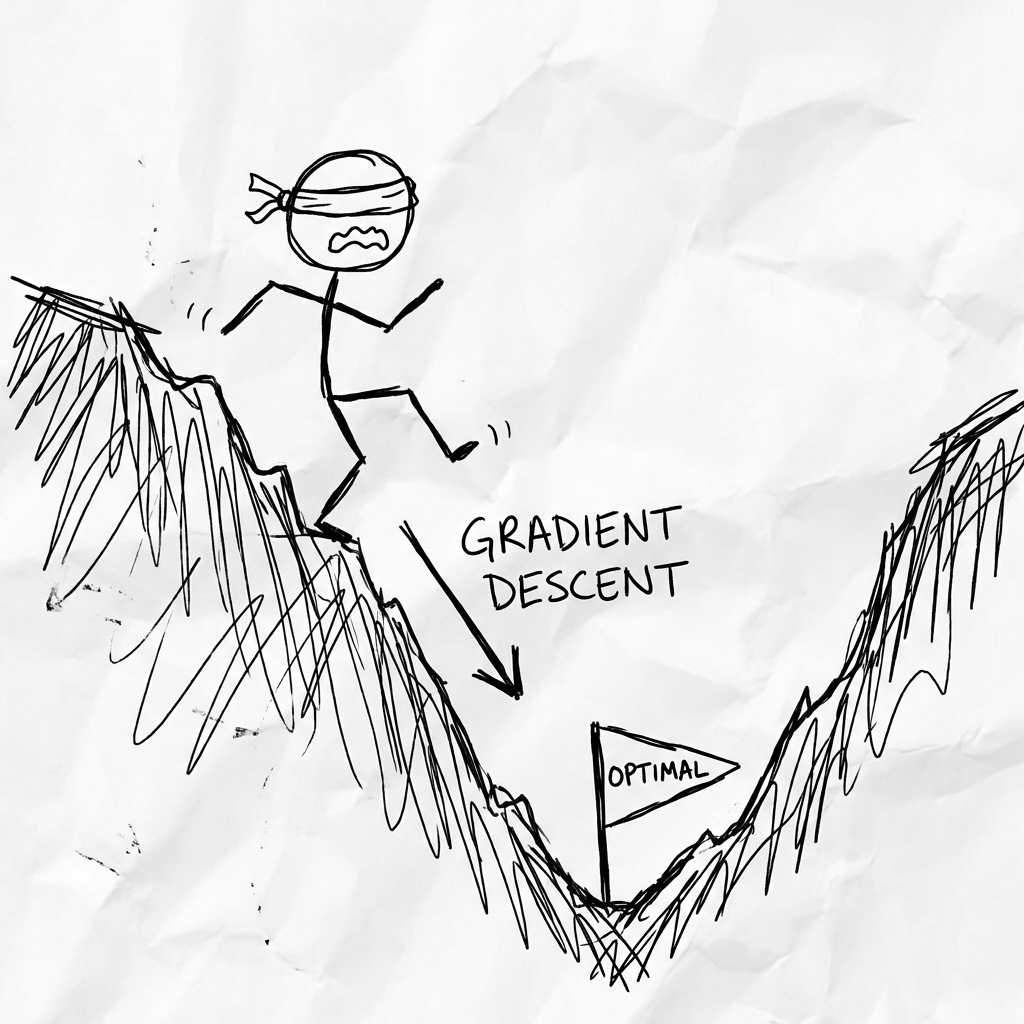

Imagine:

- You are on a mountain.

- You are blindfolded.

- You want to reach the bottom (the lowest error).

How do you do it? You feel the ground with your foot.

- If it slopes down to the left, you step left.

- If it slopes down to the right, you step right.

You take a small step (Learning Rate). Then you repeat. Step by step, you descend until you hit the valley.
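Here is the hiker as a minimal sketch in Python. Everything in it is made up for illustration: the mountain `f` is a simple bowl with its bottom at x = 2, and `feel_slope` and `hike` are hypothetical helper names. The hiker never looks at a formula for the slope. It only compares the ground height a tiny bit to the left and right, then steps the other way.

```python
def f(x):
    # Hypothetical mountain: a simple bowl whose lowest point is at x = 2.
    return (x - 2) ** 2


def feel_slope(f, x, foot=1e-5):
    # "Feel the ground": compare the height just right and just left of x.
    return (f(x + foot) - f(x - foot)) / (2 * foot)


def hike(f, x, learning_rate=0.1, steps=50):
    for _ in range(steps):
        slope = feel_slope(f, x)
        # Positive slope means uphill to the right, so step left (and vice versa).
        x = x - learning_rate * slope
    return x


bottom = hike(f, x=10.0)
print(bottom)
```

Starting from x = 10, the hiker ends up very close to 2, the bottom of this particular bowl.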

The Learning Rate (Step Size)

- Too Big: You take a giant leap. You might jump over the valley and land on the other side, then bounce back and forth without ever settling, or even land higher with each jump.

- Too Small: You take microscopic baby steps. It takes 10,000 years to reach the bottom.

- Just Right: Goldilocks. Big enough to make steady progress, small enough not to overshoot the valley.
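To see the three cases side by side, here is a small sketch. It assumes a made-up bowl f(x) = x², whose slope is 2x and whose bottom is at x = 0. The specific learning rates are picked for this bowl only. On a different function, "too big" and "just right" would be different numbers.

```python
def slope(x):
    # Derivative of the hypothetical bowl f(x) = x**2, whose bottom is at x = 0.
    return 2 * x


def descend(learning_rate, x=10.0, steps=20):
    for _ in range(steps):
        x = x - learning_rate * slope(x)
    return x


too_big = descend(learning_rate=1.1)      # each jump lands farther from the bottom
bouncing = descend(learning_rate=1.0)     # jumps between +10 and -10, never settles
too_small = descend(learning_rate=0.001)  # barely moves in 20 steps
just_right = descend(learning_rate=0.3)   # closes in on 0 quickly

for name, x in [("too big", too_big), ("bouncing", bouncing),
                ("too small", too_small), ("just right", just_right)]:
    print(f"{name:>10}: x = {x:.4f}")
```

All four hikers start at x = 10 and take 20 steps. Only the last one ends up near the bottom.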

The Math (Scary but simple)

The "Slope" is the Gradient (Derivative).

Cost Function C = How wrong your model is.

We want to minimize C.

New Weight = Old Weight - (Learning Rate * Slope)

If the slope is positive, the ground rises as the weight increases, so we decrease the weight. If the slope is negative, we increase it. Either way, we move downhill.
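The same rule in symbols, as a sketch. The names are common conventions rather than anything fixed by this article: w for the weight, η (eta) for the learning rate, and dC/dw for the slope of the cost.

```latex
w_{\text{new}} = w_{\text{old}} - \eta \, \frac{dC}{dw}
```

One step by hand, assuming a made-up cost C(w) = w² (so the slope is 2w), a starting weight of 3, and a learning rate of 0.1:

```latex
w_{\text{new}} = 3 - 0.1 \times (2 \times 3) = 3 - 0.6 = 2.4
```

The slope was positive, so the weight went down, toward the bottom of this bowl at w = 0.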

The Code (Concept)

Usually libraries handle this, but you can write it yourself:

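    # Example cost, assumed for illustration: C(w) = (w - 3)**2, lowest at w = 3.
    def compute_derivative(weight):
        return 2 * (weight - 3)  # slope of C at the current weight

    weight = 0.5
    learning_rate = 0.01

    for step in range(100):
        gradient = compute_derivative(weight)  # which way is uphill, and how steep
        weight = weight - (learning_rate * gradient)  # step the opposite way

Local Minima (The Trap)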

Sometimes you hit a small valley (pothole) and think you are at the bottom. But the real bottom is miles away. This is a Local Minimum. Optimizers with momentum (Adam includes a form of it) behave like a heavy ball rolling downhill: the built-up speed can carry them through small potholes, though it is no guarantee of reaching the true bottom.
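A small sketch of the trap itself. The bumpy landscape `f` is made up for illustration: it has a shallow valley near x = 1.13 and a deeper one near x = -1.30. This is plain gradient descent with no momentum, so it only shows how the starting point decides which valley you end up in.

```python
def f(x):
    # Hypothetical bumpy landscape with two valleys:
    # a shallow one near x = 1.13 and a deeper one near x = -1.30.
    return x**4 - 3 * x**2 + x


def slope(x):
    # Derivative of f, worked out by hand.
    return 4 * x**3 - 6 * x + 1


def descend(x, learning_rate=0.01, steps=500):
    for _ in range(steps):
        x = x - learning_rate * slope(x)
    return x


stuck = descend(x=2.0)    # starts on the right, settles in the shallow valley
lucky = descend(x=-2.0)   # starts on the left, settles in the deeper valley

print(f"from  2.0: x = {stuck:.3f}, height = {f(stuck):.3f}")
print(f"from -2.0: x = {lucky:.3f}, height = {f(lucky):.3f}")
```

Both hikers stop where the ground feels flat, but only the one who started on the left found the real bottom.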

Summary

Gradient Descent is just trial and error with a sense of direction. It is the engine that trains the models behind ChatGPT, AlphaGo, and most of modern deep learning.

Next up: The student who studies too hard.