ML 6: The Mean Girls (K-Means Clustering)

Sorting things when you have absolutely no idea what they are.

Unsupervised Learning

So far, we've had a cheat sheet. We knew which dots were "Dogs" and which were "Wolves". This is called Supervised Learning.

But what if you just have a bucket of Legos? No labels. No instructions. Just chaotic plastic.

You start grouping them yourself:

- "These are red."

- "These are blue."

- "These are weird tiny heads."

This is Clustering. And the most famous algorithm is K-Means.

How K-Means Works

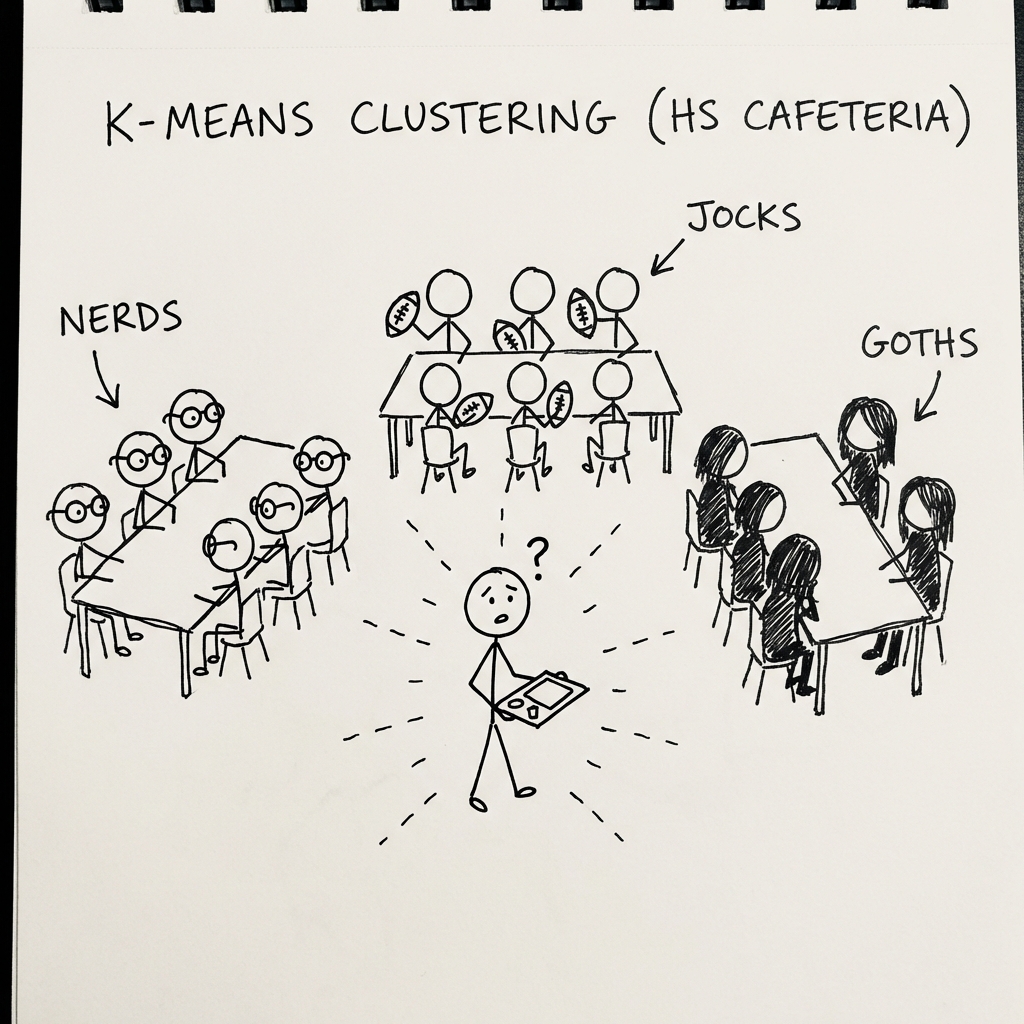

Imagine a high school cafeteria.

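- Selection: You pick K random tables (centroids). Let's say K=3 (Jocks, Nerds, Goths).

- Assignment: Every student sits at the table they are most similar to (in K-Means, "most similar" means closest, usually by straight-line distance).

- Update: Each table's "center" moves to the exact middle (the average position) of everyone sitting there.

- Repeat: Now some students are closer to a different table's center, so they switch tables, and the centers update again.

- Eventually, nobody switches tables and the centers stop moving. Everyone has settled down.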
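The cafeteria steps map almost one-to-one onto code. Here is a minimal from-scratch sketch in NumPy, assuming Euclidean distance as the meaning of "most similar". The function names and the six-point dataset are made up for illustration. This is plain K-Means with random starting centers, not scikit-learn's implementation (which uses a smarter "k-means++" starting rule by default).

```python
import numpy as np


def assign_to_nearest(X, centroids):
    """Assignment step: index of the closest centroid for every point."""
    # Distance from every point to every centroid, shape (n_points, k)
    dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)


def kmeans_from_scratch(X, k, n_iters=100, seed=0):
    """Minimal K-Means (Lloyd's algorithm) with random starting centers."""
    rng = np.random.default_rng(seed)
    # Selection: pick k distinct data points as the starting centers
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iters):
        labels = assign_to_nearest(X, centroids)
        # Update: move each center to the mean of its points
        # (a group that ends up empty keeps its old center)
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        # Stop once no center moves: everyone has settled down
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return assign_to_nearest(X, centroids), centroids


# Tiny made-up dataset: two obvious groups of three points each
X = np.array([[1.0, 1.0], [1.2, 0.8], [0.9, 1.1],
              [8.0, 8.0], [8.2, 7.9], [7.8, 8.1]])
labels, centers = kmeans_from_scratch(X, k=2)
print(labels)
print(centers)
```

The first three points end up sharing one label and the last three share the other. Which group gets called 0 and which gets called 1 depends on the random start, which is exactly the "it doesn't know what the pile is" point from the summary.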


You have to tell the algorithm K (how many groups you want).

- K=2: Two big blobs.

- K=10: Ten tiny cliques.
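To see that K is your decision and not the algorithm's, here is a small sketch using scikit-learn's `KMeans` (the same class used in the code section). The blob data and the helper name `group_sizes` are made up for this example: the data secretly has 3 groups, but we ask for 2 and then 10.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# Made-up data: 300 points scattered around 3 hidden centers
X, _ = make_blobs(n_samples=300, centers=3, random_state=0)


def group_sizes(X, k):
    """Run K-Means with the given K and count how many points land in each group."""
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(X)
    # bincount counts the points carrying each label 0..k-1
    return np.bincount(labels, minlength=k)


for k in (2, 10):
    sizes = group_sizes(X, k)
    print(f"K={k}: {len(sizes)} groups with sizes {sizes.tolist()}")
```

K-Means never pushes back: ask for 2 groups and it merges real groups into two blobs, ask for 10 and it chops them into cliques.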

The Code (Python)

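```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# 0. Some unlabeled data (made up for this example: 300 points around 3 hidden centers)
# make_blobs also returns the true labels; we throw them away with _
X_data, _ = make_blobs(n_samples=300, centers=3, random_state=42)

# 1. The Sorter
# n_clusters=3 means we want 3 groups
kmeans = KMeans(n_clusters=3, n_init=10, random_state=42)

# 2. Fit (Notice we only give X, no y!)
# The model doesn't know the answers. It finds them.
kmeans.fit(X_data)

# 3. Get the labels: one group number (0, 1, or 2) per data point
groups = kmeans.labels_
print(groups[:5])  # something like [0 1 0 2 1]; the exact numbers depend on the data
```

The Problem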

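How do you choose K? If K is too small, different groups get mashed together. If K is too big, real groups get chopped into tiny pieces; push it all the way (K equal to the number of people) and every person is their own group. A common tool is the "Elbow Method": run K-Means for several values of K, measure how far points sit from their group's center, and pick the K where adding more groups stops helping much. But honestly, people often just try a few values and see what looks pretty.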
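A minimal sketch of the Elbow Method, assuming scikit-learn: `inertia_` is the fitted model's sum of squared distances from each point to its center. The made-up data has 4 tight hidden groups, so we would expect the curve to bend around K=4; the helper name `elbow_curve` is hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# Made-up data with 4 hidden groups
X, _ = make_blobs(n_samples=300, centers=4, cluster_std=0.6, random_state=0)


def elbow_curve(X, k_values):
    """Inertia (sum of squared distances from each point to its center) for each K."""
    return {
        k: KMeans(n_clusters=k, n_init=10, random_state=0).fit(X).inertia_
        for k in k_values
    }


curve = elbow_curve(X, range(1, 9))
for k, inertia in curve.items():
    print(f"K={k}: inertia={inertia:.1f}")
```

Inertia tends to keep shrinking as K grows (down to 0 when every point gets its own center), so the smallest number is never the answer. You look for the "elbow" where the big drops turn into small ones.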

Summary

K-Means is like organizing a messy room by throwing similar things into piles. It doesn't know what a pile is (it won't label it "Socks"), but it knows the things in each pile belong together.

Next up: The engine that powers almost all modern ML. The Blind Hiker.