ML 4: The 20 Questions (Decision Trees)

How to make decisions like a toddler asking 'Why?' repeatedly.

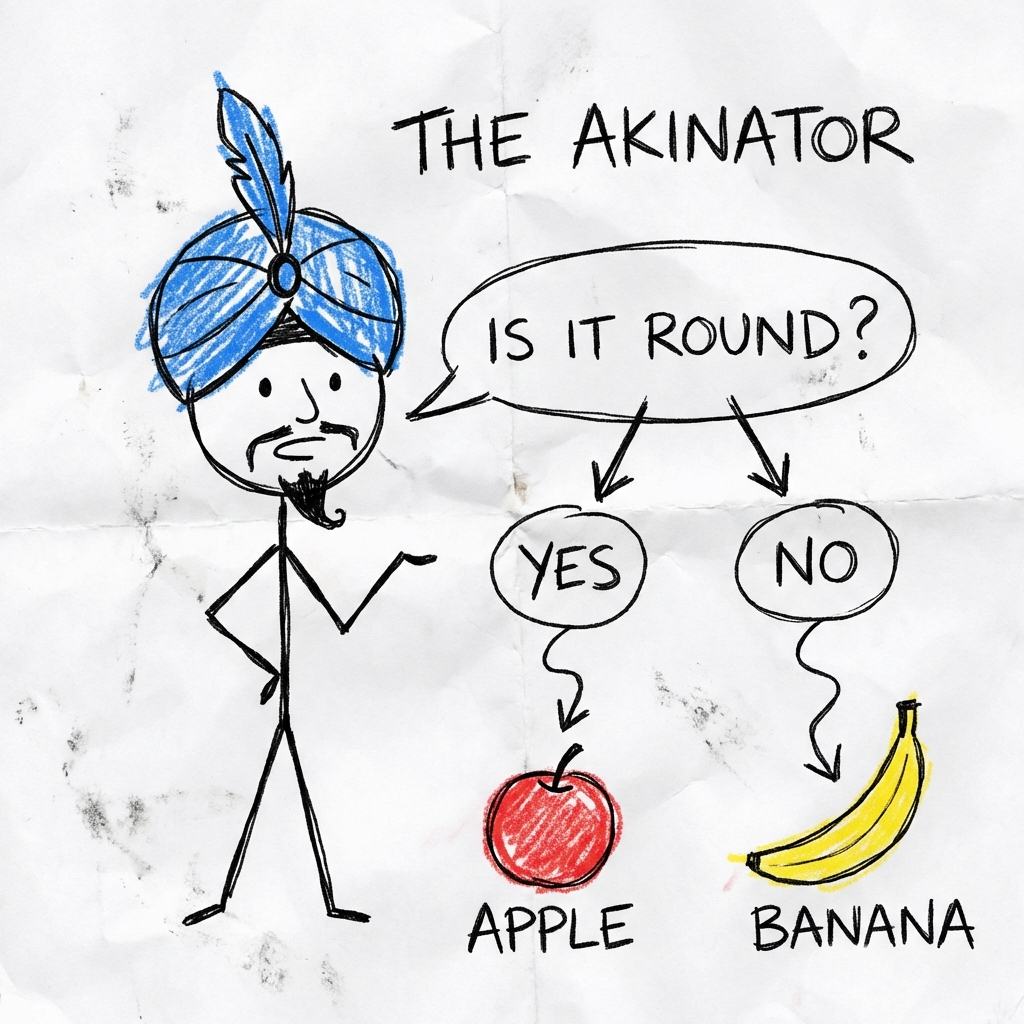

The Akinator

Remember that game "20 Questions"? "Is it a person?" -> Yes. "Is he alive?" -> No. "Is he a scientist?" -> Yes. "Is it Einstein?" -> Yes.

That is literally a Decision Tree.

The Flowchart of Life

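A Decision Tree is just a flowchart that splits your data into smaller and smaller groups by asking yes/no questions. It stops when each group is (mostly) one answer or when it runs out of allowed questions, and then it guesses the most common answer in that group.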
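How does the tree measure how mixed a group is? One common score is Gini impurity, which is what scikit-learn's DecisionTreeClassifier uses by default (`criterion="gini"`). Below is a minimal from-scratch sketch that assumes labels are plain Python strings. The helper names `gini` and `split_impurity` are made up for illustration. A pure group scores 0, and the tree prefers the question whose resulting groups have the lowest weighted impurity.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: chance that two random picks (with replacement) disagree. 0 means pure."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())

def split_impurity(left, right):
    """Weighted average Gini of the two groups that a yes/no question creates."""
    n = len(left) + len(right)
    return len(left) / n * gini(left) + len(right) / n * gini(right)

fruit = ["Apple", "Apple", "Cherry", "Orange"]
print(gini(fruit))  # a mixed bag of fruit

# Question A: "Is it red?" splits off the Orange into its own pure group
print(split_impurity(["Apple", "Apple", "Cherry"], ["Orange"]))

# Question B: a useless question that leaves both groups mixed
print(split_impurity(["Apple", "Cherry"], ["Apple", "Orange"]))
```

Question A scores lower (about 0.33 versus 0.5), so a tree picking between these two questions would ask A first.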

Imagine you are hiring a developer:

- Q1: Do they know Python?
  - No: Don't hire.
  - Yes: Go to Q2.
- Q2: Do they copy-paste from StackOverflow?
  - No: Suspicious. Don't hire.
  - Yes: HIRE IMMEDIATELY.
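Written as code, the hiring flowchart is nothing more than nested if/else questions. This is a hand-written sketch, and the function name `should_hire` is hypothetical. The only difference with a real Decision Tree is that the tree learns which questions to ask (and in what order) from data instead of you writing them by hand.

```python
def should_hire(knows_python: bool, copies_from_stackoverflow: bool) -> str:
    """The hiring flowchart, one if-statement per question."""
    # Q1: Do they know Python?
    if not knows_python:
        return "Don't hire"
    # Q2: Do they copy-paste from StackOverflow?
    if not copies_from_stackoverflow:
        return "Suspicious. Don't hire"
    return "HIRE IMMEDIATELY"

print(should_hire(knows_python=True, copies_from_stackoverflow=True))
```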

The Splitter

The tree makes "cuts" in the data:

- Cut 1: Is Color == Red?
- Cut 2: Is Size > 5cm?

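Unlike a Linear Regression line, which can tilt at any angle, each Decision Tree cut asks about just one feature at a time (e.g. "Size > 5cm?"). So on a 2D plot, every cut is a perfectly horizontal or vertical line. Stack a few cuts together and the boundary looks like steps on a staircase.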
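You can see the staircase for yourself by asking scikit-learn to print the rules a trained tree learned. This is a minimal sketch with made-up fruit data: the six samples and the feature names `redness` and `size` are hypothetical. `export_text` is scikit-learn's plain-text dump of a fitted tree. Every line in the printout compares a single feature against a single number, which is exactly why each cut is horizontal or vertical. It also doubles as the "see why it made a decision" trick: follow the rules from the top down to a leaf.

```python
from sklearn.tree import DecisionTreeClassifier, export_text

# Hypothetical toy fruit data: [Redness (0-10), Size in cm]
X = [[9, 3], [8, 2], [9, 8], [8, 7], [2, 8], [1, 9]]
y = ["Cherry", "Cherry", "Apple", "Apple", "Orange", "Orange"]

model = DecisionTreeClassifier(random_state=0).fit(X, y)

# Every rule tests ONE feature against ONE threshold -> horizontal/vertical cuts
rules = export_text(model, feature_names=["redness", "size"])
print(rules)

print(model.predict([[9, 6]]))  # a very red, medium-sized fruit
```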

The Code (Python)

We use scikit-learn's DecisionTreeClassifier. It's great because you can actually see why it made a decision: just follow its questions from the top of the tree down to the answer (unlike Neural Networks, which are black boxes of magic).

```python
from sklearn.tree import DecisionTreeClassifier

# 1. The Question Asker: allowed to ask at most 3 questions in a row
model = DecisionTreeClassifier(max_depth=3)

# 2. Train on fruit data
# Features: [Redness (0-10), Size (0-10)]
X = [[10, 5], [1, 5], [10, 1]]
y = ["Apple", "Orange", "Cherry"]
model.fit(X, y)

# 3. Predict: a very red, fairly big fruit
print(model.predict([[9, 6]]))
# Output: ['Apple']
```

The Catch (Overfitting)

If you let the tree ask as many questions as it likes, it becomes too specific: "Is it a round fruit, red, with a scar on the left side, bought on a Tuesday?" -> "That specific Apple I ate yesterday."

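This is called Overfitting. The tree memorized the training data, noise and all, instead of learning the general rules, so it aces fruit it has already seen and stumbles on new fruit. We stop this by setting max_depth, which limits how many questions the tree can ask in a row (from the top of the tree down to any answer).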
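To watch overfitting happen, compare an unlimited tree with a depth-limited one on noisy data. This sketch uses synthetic data from scikit-learn's `make_classification`. The sample count, the 20% label noise (`flip_y=0.2`) and the random seeds are arbitrary choices for illustration. The unlimited tree will score a perfect 1.00 on its training data because it memorizes every noisy label. Compare its test score with the `max_depth=3` tree's to see how little of that memorized detail carries over to new data. The exact numbers depend on the seed.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic data where 20% of labels are randomly flipped (noise a tree can "memorize")
X, y = make_classification(n_samples=500, n_features=5, flip_y=0.2, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

deep = DecisionTreeClassifier(random_state=42).fit(X_train, y_train)  # no depth limit
shallow = DecisionTreeClassifier(max_depth=3, random_state=42).fit(X_train, y_train)

for name, model in [("no limit", deep), ("max_depth=3", shallow)]:
    print(f"{name:12} depth={model.get_depth():2}  "
          f"train={model.score(X_train, y_train):.2f}  "
          f"test={model.score(X_test, y_test):.2f}")
```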

Summary

Decision Trees are simple, interpretable, and work exactly like your brain when choosing what to eat for lunch. "Is it healthy? -> No. -> Is it tasty? -> Yes. -> Pizza."

Next up: What happens when we plant a whole forest of these trees?