ML 3: The Committee (Neural Networks)

What is a neural network, really? A 3-minute visual guide to hidden layers, why one neuron is dumb but three are AI, and how the math actually works.

The Problem with Lines

In ML 1, we drew a line. In ML 2, we drew a curve. But what if the data looks like a donut? Or a chessboard? You can't separate a donut's hole from its ring with a single straight knife cut.

You need something Non-Linear.

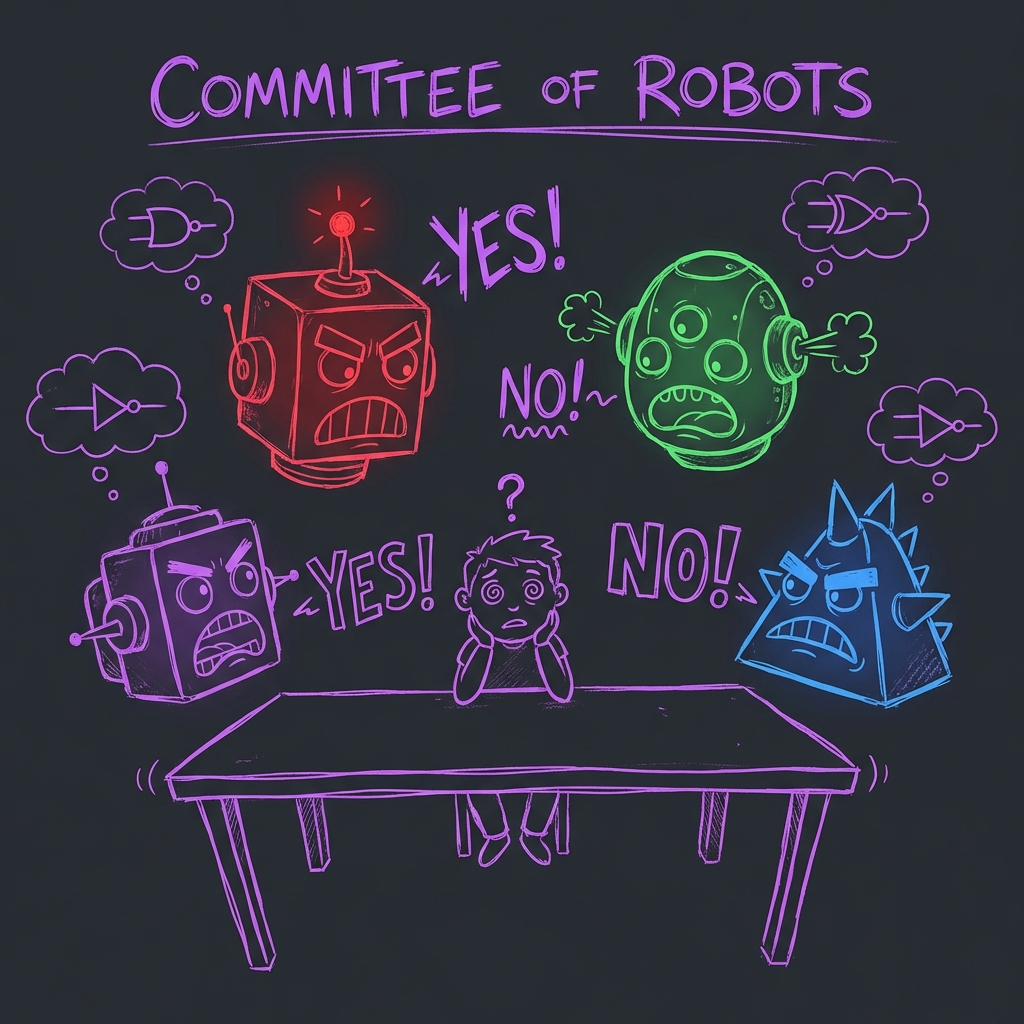
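To check the "one straight cut isn't enough" claim, here is a minimal sketch using the smallest possible chessboard: four points in the XOR pattern (an illustrative assumption, not data from this article). It tries every line `w1*x + w2*y + b > 0` on a coarse grid of weights and reports the best accuracy any single line achieves. The helper names `line_classifier` and `accuracy` are hypothetical.

```python
import itertools

# The smallest "chessboard": the XOR pattern. Same-colored squares sit on opposite corners.
points = [(0, 0), (1, 1), (0, 1), (1, 0)]
labels = [0, 0, 1, 1]

def line_classifier(w1, w2, b):
    """A single straight-line rule: answer 1 if w1*x + w2*y + b > 0, else 0."""
    return lambda x, y: 1 if w1 * x + w2 * y + b > 0 else 0

def accuracy(rule):
    """Fraction of the four squares the rule colors correctly."""
    return sum(rule(x, y) == label for (x, y), label in zip(points, labels)) / len(points)

# Try every line whose weights and bias lie on a grid from -2.0 to 2.0 in steps of 0.25.
grid = [i / 4 for i in range(-8, 9)]
best = max(accuracy(line_classifier(w1, w2, b))
           for w1, w2, b in itertools.product(grid, repeat=3))
print(f"Best accuracy of a single line: {best:.0%}")
```

No line gets all four squares right: the best any single line can do is 3 out of 4. The grid search is only illustrative; the XOR pattern cannot be split by any straight line, on the grid or off it.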

The Committee of Idiots

Imagine you want to decide: "Should I go to the party?" You (the Output Neuron) ask your three friends (the Hidden Layer):

- Grumpy (Neuron 1): "Is there loud music?" (If yes, I vote NO).

- Happy (Neuron 2): "Is there free food?" (If yes, I vote YES).

- Sleepy (Neuron 3): "Is it past 9pm?" (If yes, I vote NO).

None of them see the whole picture. They just draw their own simple lines. But if you listen to all of them, you make a complex decision.

This is a Neural Network. It's just a committee of simple classifiers whose weighted votes get combined into one decision.
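The party committee can be written out directly. In this minimal sketch, each input is 1 (yes) or 0 (no), and the weights (±4, ±2) and the Boss's threshold of 1.5 are made-up values chosen for illustration, not numbers from the article. With equal voices and a threshold of 1.5, the Boss effectively follows the majority.

```python
import math

def sigmoid(z):
    """Squash any number into a soft vote between 0 (NO) and 1 (YES)."""
    return 1 / (1 + math.exp(-z))

def party_decision(loud_music, free_food, past_9pm):
    """Inputs are 1 (yes) or 0 (no). Returns the Boss's confidence that you should go."""
    # Hidden layer: each friend looks at ONE fact and casts a soft vote.
    grumpy = sigmoid(-4 * loud_music + 2)  # loud music -> votes NO
    happy = sigmoid(4 * free_food - 2)     # free food -> votes YES
    sleepy = sigmoid(-4 * past_9pm + 2)    # past 9pm -> votes NO
    # Output neuron: every friend gets the same voice (1.0); a threshold of 1.5
    # means the Boss says YES only when at least two friends vote YES.
    score = 1.0 * grumpy + 1.0 * happy + 1.0 * sleepy - 1.5
    return sigmoid(4 * score)

print(round(party_decision(loud_music=1, free_food=1, past_9pm=0), 2))
```

Here Grumpy says No (loud music), but Happy and Sleepy say Yes, so the Boss leans toward going. Give Happy a bigger voice and free food alone could outvote the other two.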

Interactive Demo: The Voting Room

The demo uses a simple Neural Network with 3 Hidden Neurons (Grumpy, Happy, Sleepy). The goal is to separate the Purple Dots (Center) from the Gray Dots (Corners).

A single straight line can't separate them. But three lines working together can carve out a triangle around the center.

Your Goal: Adjust the "Voice" (Weight) of each neuron so the Purple Zone covers the center dots.
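Here is a minimal sketch of what the demo is doing, with everything concrete made up for illustration: a hypothetical 10 x 10 board, hand-picked lines for the three neurons, and fixed weights instead of the demo's adjustable ones. Each hidden neuron turns one straight line into a sigmoid vote; the output neuron says "purple" only when all three agree, which carves out the triangle with corners (1, 2), (9, 2) and (5, 10). `HIDDEN_LINES` and `committee` are hypothetical names.

```python
import math

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

# Hypothetical lines for a 10 x 10 board: (a, b, c) means "vote YES when a*x + b*y + c > 0".
HIDDEN_LINES = {
    "Grumpy": (0, 1, -2),    # YES above the line y = 2
    "Happy": (2, -1, 0),     # YES below the line y = 2x
    "Sleepy": (-2, -1, 20),  # YES below the line y = -2x + 20
}

def committee(x, y, steepness=3.0):
    """Return the 'purple' score (0..1) for the point (x, y)."""
    # Hidden layer: each neuron turns its own straight line into a soft 0..1 vote.
    votes = [sigmoid(steepness * (a * x + b * y + c)) for a, b, c in HIDDEN_LINES.values()]
    # Output neuron: equal voices of 1.0 and a threshold of 2.5, so it only says
    # "purple" when all three neurons vote YES (an AND of the three half-planes).
    return sigmoid(10 * (sum(votes) - 2.5))

for point in [(5, 5), (0, 0), (10, 0), (0, 10), (10, 10)]:
    print(point, round(committee(*point), 3))
```

The center (5, 5) lands inside all three half-planes and scores close to 1, while every board corner falls on the wrong side of at least one line and scores close to 0. Moving a line or changing a voice in this code is the same game the demo asks you to play.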


What is happening?

- Input Layer: Takes in X and Y coordinates.

- Hidden Layer (The Committee): Each neuron draws a straight line (a linear boundary).

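- Activation Function (The Logic): We use Sigmoid (or ReLU) to turn each line into a vote. Sigmoid squashes the result into a soft "Yes/No" between 0 and 1; ReLU outputs 0 ("No") or a positive strength.

- Output Layer (The Boss): Sums up the votes, each multiplied by that neuron's Voice (Weight). "Grumpy says No, Happy says Yes... okay, result is Yes."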
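The same four steps as equations (the notation is ours, not the demo's): for an input point $(x, y)$, neuron $j$'s straight line is $w_{j1}x + w_{j2}y + b_j = 0$, $v_j$ is that neuron's Voice, and $c$ is the Boss's threshold.

```latex
\begin{aligned}
h_j &= \sigma\left(w_{j1}\,x + w_{j2}\,y + b_j\right), \qquad j = 1, 2, 3 \\
\hat{y} &= \sigma\left(\sum_{j=1}^{3} v_j\, h_j + c\right) \\
\sigma(z) &= \frac{1}{1 + e^{-z}}, \qquad \mathrm{ReLU}(z) = \max(0, z)
\end{aligned}
```

Swapping $\sigma$ for ReLU in the hidden layer keeps the same structure; only the shape of each vote changes.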

By combining simple straight lines, we created a complex shape.

The Code (Python)

In Python, we use libraries like TensorFlow or PyTorch (or scikit-learn for simple ones).

Here is a "Multi-Layer Perceptron" (MLP) Classifier.

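```python
import numpy as np
from sklearn.neural_network import MLPClassifier

# 0. Toy donut-shaped data (an assumption; the article's data isn't shown):
#    label 1 = purple dots near the center (5, 5), label 0 = gray dots farther out
rng = np.random.default_rng(0)
X_train = rng.uniform(0, 10, size=(500, 2))
y_train = (np.linalg.norm(X_train - 5, axis=1) < 3).astype(int)

# 1. The Expert Committee (Neural Net)
# hidden_layer_sizes=(3,) means ONE hidden layer with 3 neurons (Grumpy, Happy, Sleepy)
# activation='logistic' is the Sigmoid function
model = MLPClassifier(hidden_layer_sizes=(3,), activation='logistic',
                      max_iter=2000, random_state=0)

# 2. Train it on the donut-shaped data
model.fit(X_train, y_train)

# 3. Ask the committee about the center point
prediction = model.predict([[5, 5]])
print(prediction)
```

Summary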

A Neural Network isn't magic. It's just a lot of simple math (weighted sums passed through activation functions) stacked on top of each other. When people say "Deep Learning", they just mean the committee has 100 layers of management.
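To make the "100 layers of management" joke concrete, here is a minimal pure-Python sketch (not how TensorFlow or PyTorch implement it) in which a deep network is nothing more than a loop that feeds each layer's votes into the next. The helpers `dense_layer`, `forward` and `random_layer` are hypothetical names, and the weights are random and untrained, so the output number means nothing; only the structure matters.

```python
import math
import random

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of ALL inputs, adds its bias, then votes."""
    return [sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

def forward(inputs, layers):
    """A deep network is just layers applied one after another."""
    for weights, biases in layers:
        inputs = dense_layer(inputs, weights, biases)
    return inputs

rng = random.Random(0)

def random_layer(n_in, n_out):
    """Untrained, random weights: enough to show the structure, not to make good decisions."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-1, 1) for _ in range(n_out)]
    return weights, biases

# 2 inputs (x, y) -> 100 hidden layers of 3 neurons each -> 1 output neuron (the Boss)
layers = [random_layer(2, 3)] + [random_layer(3, 3) for _ in range(99)] + [random_layer(3, 1)]
output = forward([5.0, 5.0], layers)
print(len(layers), output)
```

Going from one hidden layer to a hundred changes only the length of the `layers` list; each layer is still the same weighted-sum-then-vote step.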

Now go tell your boss you understand Deep Learning.