ML 2: The Sorting Hat (Logistic Regression)

How to teach a computer to tell 'Hotdogs' from 'Not Hotdogs'. The magic of the S-Curve.

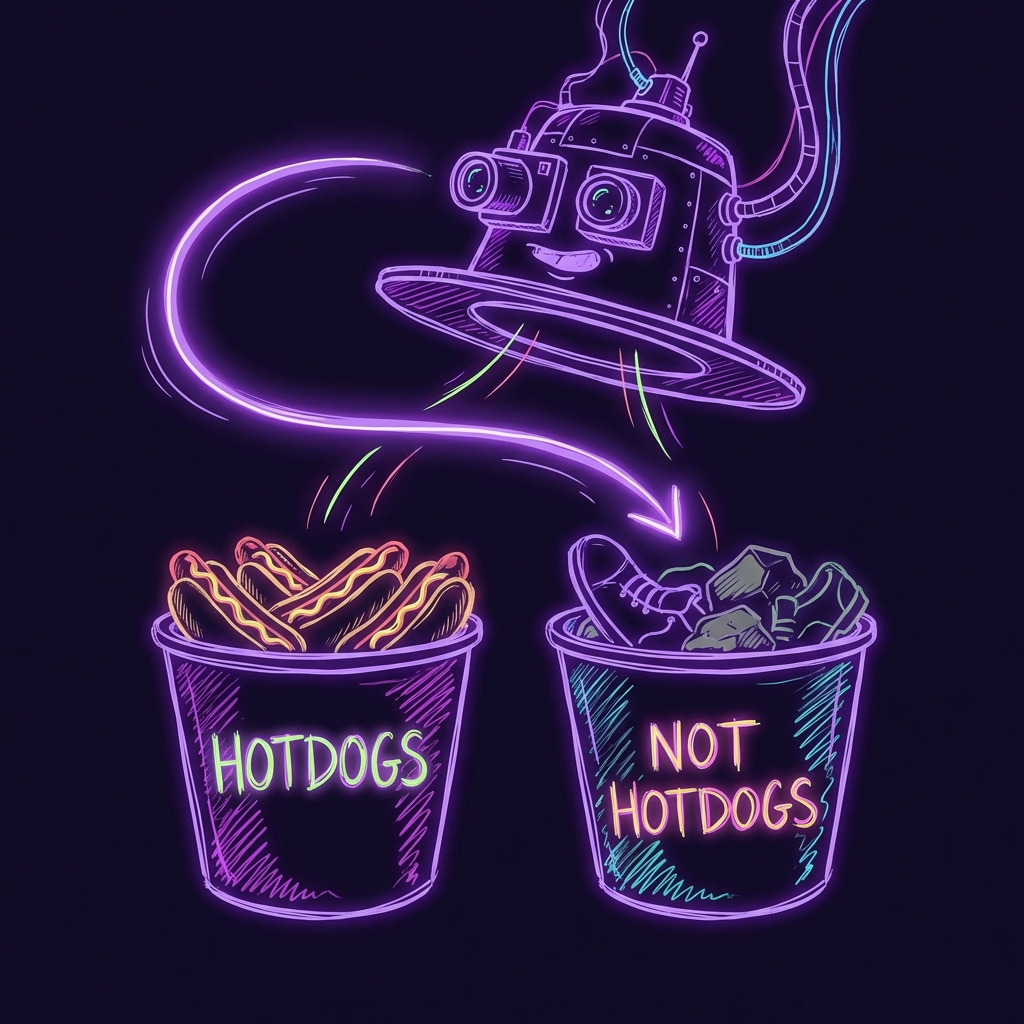

The "Hotdog" Problem

In the last article, we drew a straight line through some pizza data. It was easy. But life isn't always about "How much?" (Regression).

Sometimes, life is about "Which one?" (Classification).

- Is this email Spam or Not Spam?

- Is this tumor Malignant or Benign?

- Is this a Hotdog or Not a Hotdog? (Silicon Valley reference, anyone?)

The Linear Failure

Imagine you have data points.

- 0 (Blue) = Not Hotdog (e.g., a shoe)

- 1 (Red) = Hotdog

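If you try to draw a straight line through them (Linear Regression), it looks stupid. The line keeps going forever in both directions, so far enough to the right it predicts values above 1, and far enough to the left it predicts values below 0. Does that mean it's 500% Hotdog? No.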

We need a way to squash the answer between 0% (No) and 100% (Yes).
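Here is a minimal sketch of that failure in numbers. The data points are made up for illustration (one feature, eight objects), and `np.polyfit` stands in for Linear Regression.

```python
import numpy as np

# Hypothetical toy data: one feature per object, label 0 = not hotdog, 1 = hotdog.
x = np.array([1, 2, 3, 4, 6, 7, 8, 9], dtype=float)
y = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)

# Fit an ordinary least-squares straight line: y ~ slope * x + intercept.
slope, intercept = np.polyfit(x, y, deg=1)

def line(value):
    """Prediction of the fitted straight line at a given feature value."""
    return slope * value + intercept

# Inside the data the line looks reasonable; far outside it, the "probability" is nonsense.
for value in (-5.0, 5.0, 20.0):
    print(f"x = {value:5.1f} -> line says {line(value):.2f}")
```

Far to the right the line's answer climbs above 1, and far to the left it drops below 0, which is exactly the "500% Hotdog" problem.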

Enter The S-Curve (Sigmoid)

We need a function that looks like an "S". It starts near 0, climbs slowly, shoots up in the middle, and flattens out just below 1.

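Mathematically, it's this ugliness:

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

Here $z = \text{weight} \cdot x + \text{bias}$, the same straight line as before, now fed through the S-Curve.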

But you're an idiot, so just think of it as The Probability Squasher.
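If you'd rather see the squashing than read about it, here is a small sketch of the standard sigmoid, 1 / (1 + e^(-z)). The function name `sigmoid` and the sample inputs are just illustrative choices.

```python
import numpy as np

def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

# Very negative inputs land near 0, very positive inputs land near 1,
# and an input of exactly 0 lands on 0.5.
for z in (-6.0, -2.0, 0.0, 2.0, 6.0):
    print(f"z = {z:5.1f} -> {sigmoid(z):.3f}")
```

No matter how big or small the input gets, the output never leaves the 0-to-1 range.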

Try It Yourself: The Hotdog Detector

I want you to be the machine again. Below are some objects.

- Left (Blue): Shoes, Rocks, Sadness.

- Right (Red): Delicious Hotdogs.

Use the sliders to fit the S-Curve perfectly so it separates them. Your goal is to get the Log Loss Error under 0.20.

[Interactive demo: The "Hotdog" Detector]
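For the curious, here is roughly what a Log Loss score measures. This is a sketch that assumes the usual definition (average binary cross-entropy); the object positions and the two weight/bias settings are made up for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def log_loss(weight, bias, x, y):
    """Average log loss of the curve sigmoid(weight * x + bias) against labels y."""
    p = sigmoid(weight * x + bias)
    # Clip so a prediction of exactly 0 or 1 never hits log(0).
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))

# Hypothetical positions: shoes on the left (label 0), hotdogs on the right (label 1).
x = np.array([1, 2, 3, 4, 6, 7, 8, 9], dtype=float)
y = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)

print(log_loss(0.0, 0.0, x, y))    # flat curve: every guess is 50/50
print(log_loss(2.0, -10.0, x, y))  # steep curve centered at x = 5
```

The flat curve is punished for shrugging at everything, while the steep, well-placed curve scores comfortably under 0.20 on this toy data. Confident wrong answers are punished hardest of all.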

What Did You Just Do?

You just trained a Logistic Regression model.

- Weight (Steepness): Determines how confident the model is around the boundary. A steep cliff means "I AM CERTAIN this is a hotdog." A flat slope means "Eh, maybe?"

- Bias (Position): Shifts the decision boundary left or right.
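A computer doesn't have hands for sliders, so it nudges the weight and bias automatically. Below is a minimal sketch using plain gradient descent, which is one common way to do it and not necessarily what any particular library does. The data, the learning rate, and the step count are all assumptions picked for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical toy data: label 0 = shoe, 1 = hotdog.
x = np.array([1, 2, 3, 4, 6, 7, 8, 9], dtype=float)
y = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)

weight, bias = 0.0, 0.0   # start with a flat, clueless curve
learning_rate = 0.1       # assumed value, small enough to stay stable here

for step in range(10000):
    p = sigmoid(weight * x + bias)   # current predictions
    error = p - y                    # how far off each prediction is
    # Gradient of the average log loss with respect to weight and bias.
    weight -= learning_rate * np.mean(error * x)
    bias -= learning_rate * np.mean(error)

# The curve crosses 50% where weight * x + bias = 0.
boundary = -bias / weight
print(f"weight = {weight:.2f}, bias = {bias:.2f}, boundary at x = {boundary:.2f}")
```

The weight ends up positive (the curve rises to the right), and the 50% crossing point settles between the shoes and the hotdogs, which is the same thing you did by hand.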

The Code (Python)

Enough toys. Here is how you do it in the real world with scikit-learn.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# 1. The Data (Feature: How red is it?)
X = np.array([[1], [2], [3], [4], [6], [7], [8], [9]])

# 2. The Answers (0 = Shoe, 1 = Hotdog)
y = np.array([0, 0, 0, 0, 1, 1, 1, 1])

# 3. Create & Train
model = LogisticRegression()
model.fit(X, y)

# 4. Predict
# Is a "Level 5 Redness" object a hotdog?
prediction = model.predict([[5]])
probability = model.predict_proba([[5]])

print(f"Is it a hotdog? {prediction[0]}")
print(f"Probability of hotdog: {probability[0][1]:.2f}")
# Level 5 sits exactly halfway between the shoes and the hotdogs, so the
# probability lands near 0.5 and the 0-or-1 answer could go either way.
```

Summary

- Linear Regression: How much? (Line)

- Logistic Regression: Yes or No? (S-Curve)

You now know two of the building blocks behind a huge chunk of AI. Next time, we'll talk about Neural Networks (which are, roughly, lots of these stacked on top of each other).