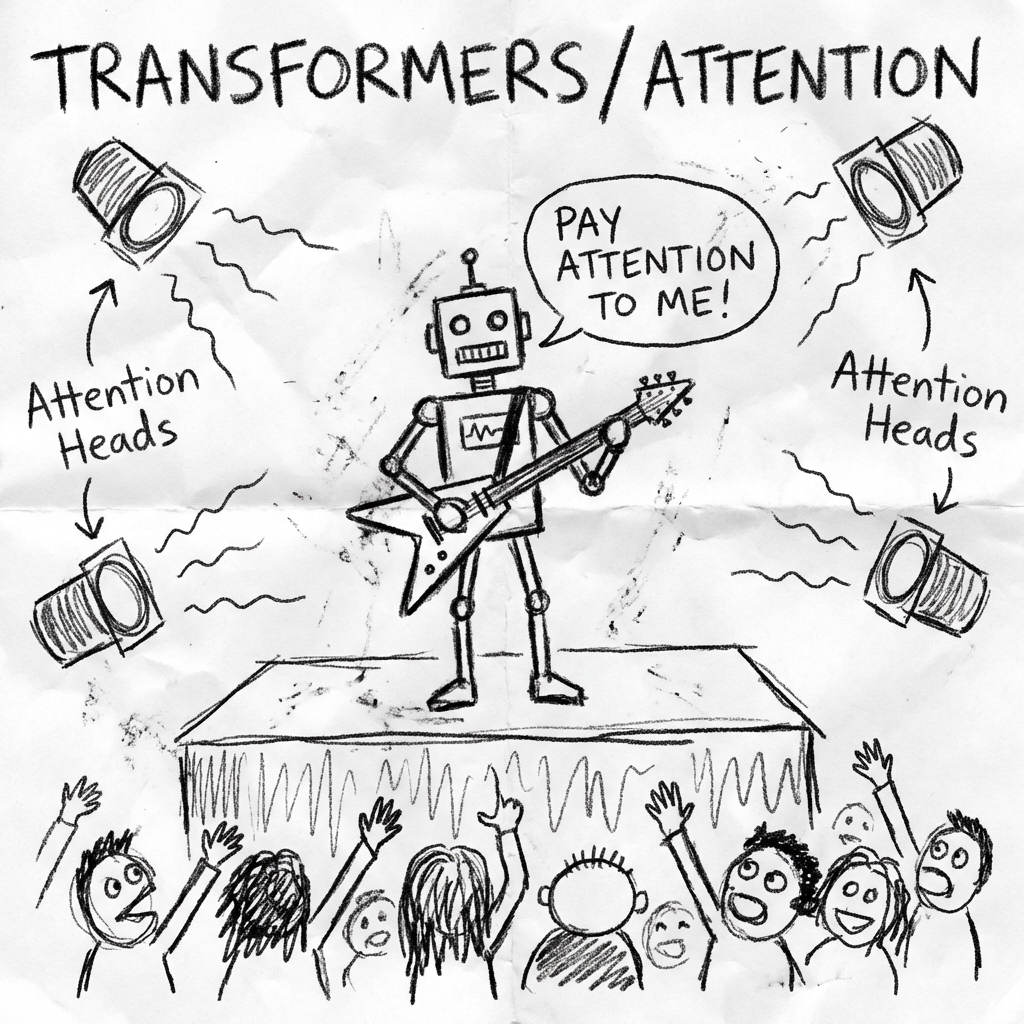

ML 11: The Attention Seeker (Transformers)

What is a Transformer? How does attention actually work? The architecture behind ChatGPT, explained in 3 minutes with zero matrix multiplication.

The Revolution

In 2017, researchers at Google published a paper called "Attention Is All You Need". It changed everything. It largely replaced RNNs for language tasks. It gave birth to BERT, GPT, and the AI boom we live in.

The Problem with RNNs

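RNNs (recurrent neural networks) read sequentially. Word 1, then Word 2, then Word 3. Each step has to wait for the result of the previous one, so training is slow and hard to parallelize.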
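To see where the bottleneck comes from, here is a minimal sketch of a toy RNN in Python. It assumes a single-number hidden state and made-up weights `w_x` and `w_h` (hypothetical values, not from any real model). The point is the loop: step t cannot start until step t-1 has produced its hidden state.

```python
import math

def rnn_forward(inputs, w_x=0.5, w_h=0.8):
    """Toy scalar RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1}).

    w_x and w_h are arbitrary illustrative weights (assumption).
    """
    h = 0.0  # initial hidden state
    states = []
    for x in inputs:  # strictly in order: each step needs the previous h
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states

states = rnn_forward([1.0, 2.0, 3.0])
print(states)
```

However many processors you have, the three steps above still run one after another.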

The Transformer

Transformers read the entire sentence at once. But how do they know which words relate to which?

Self-Attention. Imagine the sentence: "The animal didn't cross the street because it was too tired." What does "it" refer to?

- The Street? No.

- The Animal? Yes.

The Transformer calculates an "Attention Score" between every word and every other word (including itself), then turns each word's scores into weights that add up to 100%. "It" pays high attention to "Animal". "Tired" also pays attention to "Animal".
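The scoring step above can be sketched as scaled dot-product attention, the form used in "Attention Is All You Need". This is a minimal pure-Python sketch under toy assumptions: the query, key, and value vectors are hand-picked 2-D numbers chosen so that "it" lines up with "animal" (in a real model they come from learned weight matrices), and the values simply reuse the keys.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(queries, keys, values):
    """Scaled dot-product attention over one sentence.

    Each word's query is compared with every word's key, scaled by
    sqrt(d); softmax turns the scores into weights, and each output is
    the weighted average of all value vectors.
    """
    d = len(keys[0])
    outputs, weight_rows = [], []
    for q in queries:  # no row depends on any other row
        scores = [dot(q, k) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out = [sum(w * v[i] for w, v in zip(weights, values))
               for i in range(len(values[0]))]
        outputs.append(out)
        weight_rows.append(weights)
    return outputs, weight_rows

# Hand-picked toy vectors (assumption, not learned): 4 words, 2 dimensions.
words = ["animal", "street", "it", "tired"]
keys = [[2.0, 0.0], [0.0, 2.0], [0.5, 0.5], [1.0, 1.0]]
values = keys  # reuse keys as values to keep the example small
queries = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [2.0, 0.0]]

outputs, weights = self_attention(queries, keys, values)
for word, w in zip(words, weights[words.index("it")]):
    print(f"it -> {word}: {w:.2f}")
```

With these numbers the "it" row puts roughly 70% of its weight on "animal". Each row is computed without waiting for any other row, which is what lets a Transformer process the whole sentence in parallel.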

[Interactive figure: Self-Attention: Who Looks at Whom? In the example shown, "it" pays 90% of its attention to "cat", which is how a Transformer works out that "it" = "cat" without reading left-to-right.]

Embeddings (Word Math)

Words (or pieces of words, called tokens) are turned into lists of numbers (vectors), called embeddings.
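Turning words into vectors is, at its simplest, a table lookup. This sketch assumes a hypothetical four-word vocabulary with made-up 3-dimensional vectors; real models split text into subword tokens and learn vectors with hundreds or thousands of dimensions.

```python
# Hypothetical tiny vocabulary with made-up 3-D vectors (not learned).
embedding_table = {
    "the": [0.1, 0.0, 0.2],
    "animal": [0.9, 0.3, 0.1],
    "was": [0.0, 0.1, 0.1],
    "tired": [0.4, 0.8, 0.0],
}

def embed(sentence):
    """Look up one vector per word (lowercased, split on spaces)."""
    return [embedding_table[word] for word in sentence.lower().split()]

vectors = embed("The animal was tired")
print(len(vectors), len(vectors[0]))  # 4 3
```

During training, the numbers in the table are adjusted so that related words end up with similar vectors.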

King - Man + Woman ≈ Queen (the result lands closest to "Queen")

The model learns that the step from "France" to "Paris" points in roughly the same direction as the step from "Japan" to "Tokyo": country to capital.
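Both ideas can be sketched with toy vectors. The 4 dimensions and every number below are made up for illustration (assumption); real embeddings are learned and their dimensions have no human-readable labels. Because the sum only lands *near* a word, the usual approach is to pick the closest remaining word by cosine similarity, skipping the input words.

```python
import math

def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)

def add(a, b):
    return [x + y for x, y in zip(a, b)]

def sub(a, b):
    return [x - y for x, y in zip(a, b)]

# Made-up vectors (not learned):
# [royalty, gender (+1 male, -1 female), capital-city-ness, region (+1 Europe, -1 Asia)]
vocab = {
    "king":   [1.0,  1.0, 0.0,  0.0],
    "queen":  [0.9, -1.0, 0.0,  0.0],
    "man":    [0.0,  1.0, 0.0,  0.0],
    "woman":  [0.0, -1.0, 0.0,  0.0],
    "france": [0.0,  0.0, 0.0,  1.0],
    "paris":  [0.0,  0.0, 1.0,  1.0],
    "japan":  [0.0,  0.0, 0.0, -1.0],
    "tokyo":  [0.0,  0.0, 0.9, -1.1],
}

def nearest(target, exclude):
    """Closest vocabulary word to target by cosine, skipping the input words."""
    candidates = [w for w in vocab if w not in exclude]
    return max(candidates, key=lambda w: cosine(vocab[w], target))

result = add(sub(vocab["king"], vocab["man"]), vocab["woman"])
print(nearest(result, exclude={"king", "man", "woman"}))  # queen

# Country -> capital offsets point in almost the same direction.
print(cosine(sub(vocab["paris"], vocab["france"]),
             sub(vocab["tokyo"], vocab["japan"])))
```

The second print is close to, but not exactly, 1.0: the two relationships are similar directions, not identical ones.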

GPT (Generative Pre-trained Transformer)

GPT is just a giant stack of Transformer layers trained to predict the next token (a word or piece of a word). It was trained on a huge slice of the internet. It learns probabilities, not facts. It doesn't "know" that Paris is the capital of France; it knows that after "The capital of France is", the most likely next word is "Paris".
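The last step of next-token prediction can be sketched as: the model outputs a raw score (logit) for every candidate token, softmax turns those scores into probabilities, and a decoding rule picks one. The logits below are made-up numbers for illustration, not output from any real model, and greedy decoding (always take the top token) is just one of several strategies; sampling is also common.

```python
import math

def softmax(logits):
    """Convert a dict of raw scores into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores after "The capital of France is" (made-up numbers).
logits = {"Paris": 6.0, "Lyon": 2.5, "beautiful": 3.0, "a": 2.0}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # greedy: pick the most likely token
print(next_token)  # Paris
for tok, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{tok}: {p:.3f}")
```

To generate a full answer, the chosen token is appended to the prompt and the whole process repeats, one token at a time.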

Summary

Transformers are the current state of the art for language and many other tasks. They are massive parallel processing machines that focus on relationships between data points.

Next up: Teaching a robot to walk by giving it cookies.