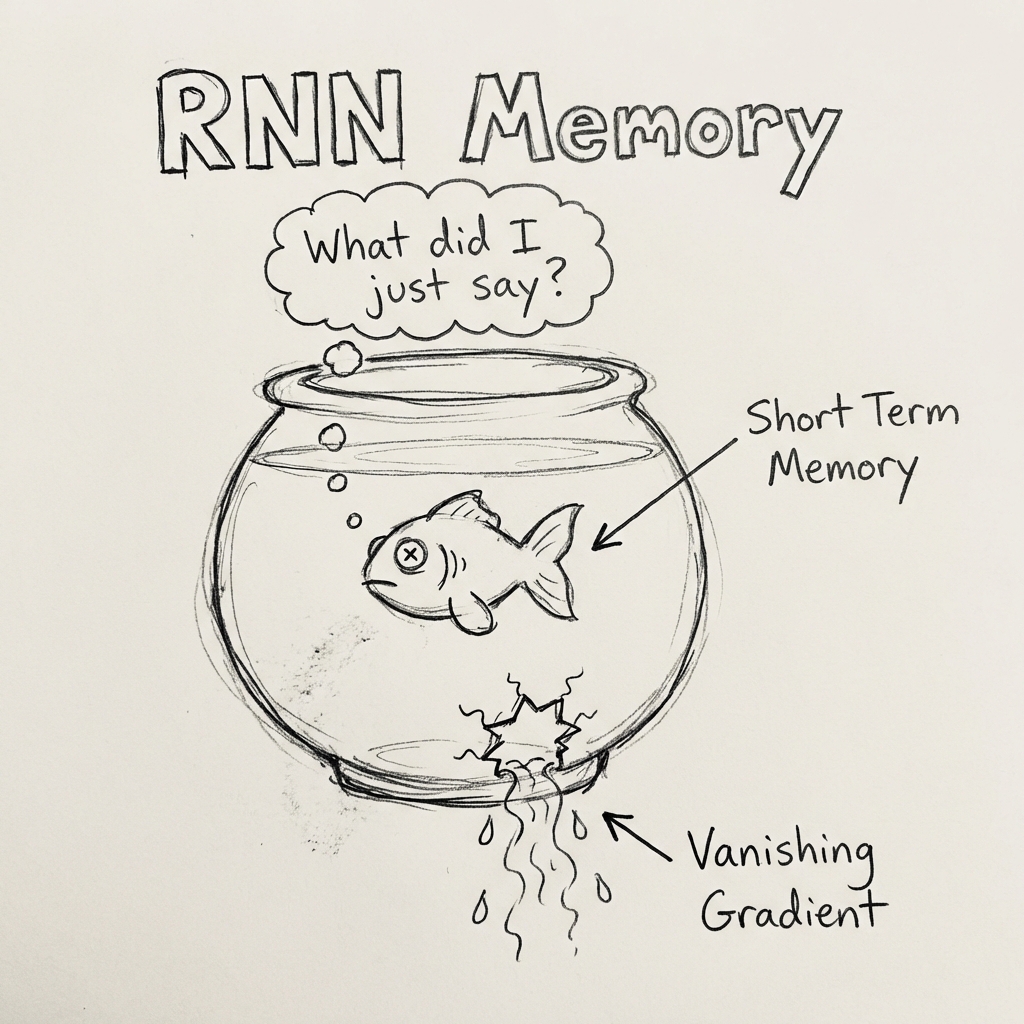

ML 10: The Goldfish (RNNs)

Giving computers a memory (even if it's a short one).

Sequence Matters

Standard (feedforward) neural nets have amnesia. When they look at Input #2, they have completely forgotten Input #1. This is fine for images (a cat is a cat, regardless of the previous photo).

But for text, speech, and other sequences, order matters.

- "I love..." -> (Next word likely: "you", "pizza", "lamp").

- "I hate..." -> (Next word likely: "spiders", "traffic").

You need Context. You need Memory.

The Recurrent Neural Network (RNN)

An RNN is a neural network that loops back on itself. When it processes a word, it passes a little "hidden state" (its memory) to the next step, and that state gets mixed with the next word. "Okay, the last word was 'love', so keep that in mind when guessing the next one."
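Here is a minimal sketch of that loop in NumPy, to make the "pass the state along" idea concrete. Everything in it is an illustrative assumption rather than a reference implementation: the four-word toy vocabulary, the names `rnn_forward`, `W_xh`, `W_hh` and `b_h`, the hidden size, and the random (untrained) weights.

```python
import numpy as np

def rnn_forward(inputs, W_xh, W_hh, b_h):
    """Run a vanilla RNN over a sequence and return the state after each step."""
    h = np.zeros(W_hh.shape[0])        # empty memory before the first word
    states = []
    for x in inputs:                   # one step per word, in order
        # The new state mixes the current word with the previous state.
        h = np.tanh(W_xh @ x + W_hh @ h + b_h)
        states.append(h)
    return np.stack(states)

# Toy setup (all assumptions): tiny vocabulary, random untrained weights.
vocab = {"i": 0, "love": 1, "hate": 2, "pizza": 3}
hidden_size = 8
rng = np.random.default_rng(0)
W_xh = rng.normal(scale=0.5, size=(hidden_size, len(vocab)))
W_hh = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b_h = np.zeros(hidden_size)

def one_hot(word):
    """Encode a word as a vector with a single 1 at its vocabulary index."""
    v = np.zeros(len(vocab))
    v[vocab[word]] = 1.0
    return v

love = rnn_forward([one_hot(w) for w in ["i", "love"]], W_xh, W_hh, b_h)
hate = rnn_forward([one_hot(w) for w in ["i", "hate"]], W_xh, W_hh, b_h)
```

After the shared first word "i" the two runs hold the same state. After the second word they diverge, and that difference is the context a trained model would use to guess "pizza" versus "spiders".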

The Vanishing Gradient (The Goldfish Problem)

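Basic RNNs are dumb. They have very short-term memory. By the time they get to the end of a long sentence, they have forgotten the beginning. "The cat, which was eating the food on the table near the window... was happy." The RNN forgets "cat" and gets confused about "was" vs "were".

The technical cause is the vanishing gradient. During training, the error signal travels backward through the sentence and gets multiplied by a small factor at every step. By the time it reaches the early words it is close to zero, so the network never learns to hold on to them.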
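A rough way to see the fading is to track how much the current state still depends on the starting state as more words arrive. The sketch below does that for a toy tanh RNN by multiplying the per-step Jacobians together. The sizes, the small weight scale, the random inputs, and the variable names are all assumptions picked to make the effect easy to see. They are not taken from a trained model.

```python
import numpy as np

rng = np.random.default_rng(0)
input_size, hidden_size, steps = 4, 16, 30

# Small random weights (assumption): typical of a freshly initialised RNN.
W_xh = rng.normal(scale=0.1, size=(hidden_size, input_size))
W_hh = rng.normal(scale=0.1, size=(hidden_size, hidden_size))

h = np.zeros(hidden_size)
sensitivity = np.eye(hidden_size)   # d(current state) / d(starting state)
norms = []
for t in range(steps):
    x = rng.normal(size=input_size)          # stand-in for the next word
    h = np.tanh(W_xh @ x + W_hh @ h)
    # Chain rule for one step: d h_t / d h_{t-1} = diag(1 - h_t^2) @ W_hh
    step_jacobian = (1.0 - h**2)[:, None] * W_hh
    sensitivity = step_jacobian @ sensitivity
    norms.append(np.linalg.norm(sensitivity))

for t in (0, 9, 19, 29):
    print(f"after step {t + 1:2d}: influence of the start = {norms[t]:.2e}")
```

The printed numbers shrink quickly with each step. The same product of small factors is what the gradient is multiplied by during training, which is why early words get almost no learning signal.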

LSTM and GRU (The Elephants)

To fix this, we invented LSTMs (Long Short-Term Memory). They have gates:

- Forget Gate: What info is trash? Throw it out.

- Input Gate: What info is new and important? Keep it.

- Output Gate: What should I pass to the next step?

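The gates control a separate "cell state" that can carry information across many steps mostly untouched, which lets LSTMs remember things for much longer. GRUs (Gated Recurrent Units) are a slimmer variant that works on the same idea with just two gates (update and reset) and often performs comparably.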
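The three gates above can be sketched as a single LSTM step in NumPy. This follows the standard textbook formulation, but the function name `lstm_step`, the choice to stack all gate weights in one matrix `W`, the sizes, and the random weights are assumptions for illustration only.

```python
import numpy as np

def sigmoid(z):
    """Squash values into (0, 1) so they can act as 'how much' dials."""
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x, h_prev, c_prev, W, b):
    """One LSTM step. W maps [h_prev, x] to four stacked gate pre-activations."""
    n = h_prev.shape[0]
    z = W @ np.concatenate([h_prev, x]) + b
    f = sigmoid(z[0 * n:1 * n])   # forget gate: how much old memory to keep
    i = sigmoid(z[1 * n:2 * n])   # input gate: how much new info to write
    o = sigmoid(z[2 * n:3 * n])   # output gate: how much memory to pass on
    g = np.tanh(z[3 * n:4 * n])   # candidate values that could be written
    c = f * c_prev + i * g        # updated long-term memory (cell state)
    h = o * np.tanh(c)            # state handed to the next step
    return h, c

# Toy run (assumption): random untrained weights, five random input vectors.
rng = np.random.default_rng(0)
input_size, hidden_size = 4, 8
W = rng.normal(scale=0.5, size=(4 * hidden_size, hidden_size + input_size))
b = np.zeros(4 * hidden_size)

h = np.zeros(hidden_size)
c = np.zeros(hidden_size)
for x in rng.normal(size=(5, input_size)):
    h, c = lstm_step(x, h, c, W, b)
```

The key line is `c = f * c_prev + i * g`. When the forget gate is near 1 and the input gate is near 0, the old memory passes through nearly unchanged, so there is no repeated squashing to make it fade.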

Summary

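RNNs allow AI to read text, understand speech, and predict stock prices (badly). But they are slow. Each step needs the state from the step before it, so they read one word at a time and can't process a whole sentence in parallel.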

Next up: The modern King of AI that killed the RNN.